Native, Open Source macOS app for benchmarking local LLMs with real-time hardware telemetry - Anubis OSS

I built a free, open-source macOS app for benchmarking local LLMs on Apple Silicon. It correlates real-time hardware telemetry (GPU/CPU power, frequency, memory, and thermals via IOReport) with inference performance, and it stores benchmark results so you can export them. I couldn't find that combination in any existing tool. Extra care was taken to keep the app and its measurements lightweight: after extensive tuning, the performance difference between running with metrics on and off was negligible. But I only have my lowly 24GB M4 Air to test on, so help me make it better for the community!

What it does

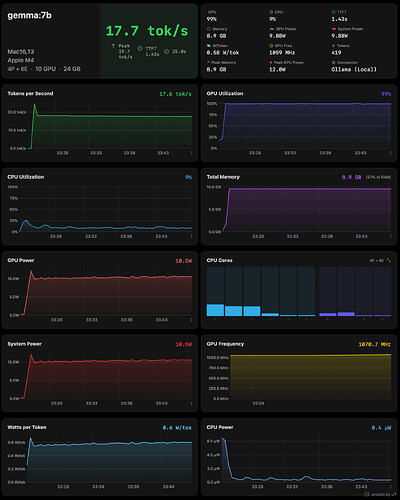

- Real-time metrics - tok/s, GPU/CPU utilization, power consumption (watts), GPU frequency, and memory - all charted live during inference

- Any backend - works with Ollama, `mlx_lm.server`, LM Studio, vLLM, LocalAI, or any OpenAI-compatible endpoint

- A/B Arena - compare two models side-by-side with the same prompt and vote on a winner

- History & Export - session history with full replay, CSV export, and one-click image export for sharing results

- Process monitoring - auto-detects backend processes and tracks their actual memory footprint (including Metal/GPU allocations)
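For anyone wondering how a tok/s figure can be derived from an OpenAI-compatible backend, here is a minimal sketch of one common approach. It is not Anubis's implementation. It assumes you have captured the streamed response as (timestamp, line) pairs in the OpenAI-style server-sent-events format (`data: {...}` chunks ending with `data: [DONE]`). It also assumes that one non-empty content delta is roughly one token, which is only an approximation because some servers pack several tokens into a chunk. The function name `stream_throughput` is hypothetical.

```python
import json


def stream_throughput(events):
    """Estimate decode speed (tokens/sec) from a captured streaming response.

    `events` is a list of (timestamp_seconds, raw_sse_line) pairs. Assumes the
    OpenAI-style chat streaming format: payload lines start with "data: ",
    hold a JSON chunk with choices[0].delta.content, and the stream ends
    with "data: [DONE]". Each non-empty content delta counts as one token,
    which is an approximation: some servers pack several tokens per chunk.
    """
    first_ts = None  # arrival time of the first content chunk
    last_ts = None   # arrival time of the latest content chunk
    chunks = 0
    for ts, line in events:
        if not line.startswith("data: "):
            continue  # ignore blank separators and SSE comments
        payload = line[len("data: "):].strip()
        if payload == "[DONE]":
            break
        choices = json.loads(payload).get("choices") or []
        if not choices:
            continue  # e.g. a trailing usage-only chunk
        delta = choices[0].get("delta") or {}
        if delta.get("content"):
            if first_ts is None:
                first_ts = ts
            last_ts = ts
            chunks += 1
    if chunks < 2 or last_ts <= first_ts:
        return 0.0  # too little data to measure a rate
    # Measure from the first to the last content chunk, so prompt processing
    # (time to first token) is excluded from the decode rate.
    return (chunks - 1) / (last_ts - first_ts)


# Hypothetical captured stream (timestamps in seconds since the request was sent).
events = [
    (0.50, 'data: {"choices":[{"delta":{"role":"assistant"}}]}'),
    (1.00, 'data: {"choices":[{"delta":{"content":"Hello"}}]}'),
    (1.25, 'data: {"choices":[{"delta":{"content":" world"}}]}'),
    (1.50, 'data: {"choices":[{"delta":{"content":"!"}}]}'),
    (1.51, "data: [DONE]"),
]
print(stream_throughput(events))  # 4.0
```

The sketch counts intervals between content chunks (three chunks give two intervals over 0.5 s) instead of dividing by total request time. This keeps time-to-first-token, which is dominated by prompt processing, from dragging down the decode rate.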

Why I hope this might be useful for the HF community

- Quantization comparisons - comparing Q4_K_M vs Q8_0 vs FP16 on your hardware? Anubis shows the actual power/performance tradeoff - not just tok/s but watts-per-token

- MLX users - works with `mlx_lm.server` out of the box. Just start the server and add it as an OpenAI-compatible backend

- Model cards & benchmarks - if you're publishing benchmarks for the community, the image export gives you shareable, branded results with one click

- Apple Silicon insights - per-core CPU utilization, GPU frequency, ANE/DRAM power - hardware data that no chat wrapper or CLI tool surfaces
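On the power/performance tradeoff: a watt is one joule per second, so dividing average power (W) by throughput (tok/s) gives energy per generated token in joules. That figure lets you compare, say, two quantizations of the same model on efficiency rather than speed alone. Below is a minimal sketch, assuming power arrives as timestamped samples like those a telemetry sampler might record. The function names are hypothetical and are not Anubis's API, and the sample numbers are made up for illustration, not measurements.

```python
def energy_joules(samples):
    """Integrate (time_seconds, power_watts) samples with the trapezoidal rule."""
    total = 0.0
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        total += 0.5 * (p0 + p1) * (t1 - t0)  # area of one trapezoid, in joules
    return total


def joules_per_token(samples, tokens_generated):
    """Energy per generated token: total joules divided by tokens produced."""
    if tokens_generated <= 0:
        raise ValueError("tokens_generated must be positive")
    return energy_joules(samples) / tokens_generated


# Made-up inputs, not measurements: power sampled once per second during a
# 2-second generation that produced 60 tokens (i.e. 30 tok/s).
samples = [(0.0, 10.0), (1.0, 12.0), (2.0, 14.0)]
print(energy_joules(samples))         # 24.0
print(joules_per_token(samples, 60))  # 0.4
```

This matches the shortcut of average power over throughput: 12 W / 30 tok/s = 0.4 J/token. Integrating the samples is more robust than multiplying a single power reading by elapsed time, because GPU power tends to fluctuate during a run.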

Links

- GitHub

- Download

- Requirements

- License

Looking for feedback

I’d especially love to hear from anyone running MLX or GGUF models locally:

- What metrics matter most to you?

- What backends should I prioritize?

- What would make this useful for your workflow?

Open an issue or start a discussion on the repo. If Anubis is useful to you, consider buying me a coffee!